Search Google for “empathy + technology” and you’ll read that “Studies have shown that increased dependence on technology has resulted in the diminishing of empathy” and “The Internet desensitizes us to shocking images, diminishing our empathy.” Meanwhile, narcissism (think selfies) and cyberbullying appear to be at all-time highs. And reality television is thriving on voyeuristic depictions of human weakness, competition, and cruelty.

A definition of empathy:

The feeling that you understand and share another person’s experiences and emotions. (Merriam-Webster)

Are we losing touch with one another? Are we sinking toward something like ancient Rome, where bloodthirsty spectators eagerly watched men fight to the death in the name of entertainment, only now on high-def screens?

Or could empathy in society actually be enhanced by the capabilities of technology? Could machines sense our emotions better than our friends and family can and broadcast that data to them? It’s not a crazy idea. In fact, wearable technologies are starting to emerge that are specifically designed to give viewers a sense of what’s going on inside another person. They may be crude now, but they will get better.

Take a look at the Necomimi product from Tokyo’s Neurowear. It’s a set of brainwave-reading cat ears that perk up when the user is alert or excited and lie flat when the user is calm. In its concept video, a boy-meets-girl-with-cat-ears story plays out: Boy approaches; girl’s prosthetic EEG-enabled cat ears stand up; girl blushes; boy gets closer.

It is simultaneously ridiculous, cute, and relatable. Holding in feelings of affection is so utterly human. Necomimi takes reservation out of the equation. The wearer implicitly creates a new social contract when putting on the headset: anything that excites or bores the wearer will be plainly obvious to observers, be it an advertisement or a married man. It may not be for you, but some people in Japan and elsewhere are using it.

The GER Mood Sweater from the American company Sensoree relies on galvanic skin response and LED-laden fabric to change color with the wearer’s mood. And Heart Spark, a DIY heart-rate monitor necklace made by San Francisco-based Sensebridge, reveals to the world with flashing lights when the wearer’s heart races.

Generating emotion in a viewer is a goal of other new technology. Sensory Fiction, an interactive and wearable book-vest combo created as a prototype by four MIT students, will swell, squeeze, or vibrate against the user as he or she flips pages. Readers can literally feel the plot thicken, joining the protagonist on ups and downs throughout the story.

Empathy changes our brains, and hence our behavior. Although empathy-enabling technology can provoke solidarity, it may also be used to manipulate us or to stimulate irrational decision-making. Politicians and advertising agencies have understood this for a long time. Behavioral studies tell us that we are more likely to donate to orphans identified with photos than with silhouettes. We are also far more likely to opt in to organ donation when asked in person by a DMV clerk than on a mail-in form. Images, smells, and sounds, which can now be conveyed by various wearable technologies, may subtly guide us toward actions that seem to defy logic. When a would-be elected official rouses audiences with stories of “Mary, the retired grandmother of five, who can’t afford her heart medication,” he is playing on voters’ empathy to win votes for a new healthcare policy, regardless of whether Mary is accurately representative of senior citizens.

The “identifiable victim effect” leads us to become more saddened and outraged by news of the kidnapping and torture of a local girl than we would be by news of thousands dead in a far-off land. Neurally, images of victims activate the nucleus accumbens, an area central to the brain’s reward and pleasure circuit. When we understand the gravity and tragedy of a loss of thousands, it is through reason, not generally because of the effects of images, empathy, and nucleus accumbens activation. Brain-imaging technology combined with data analytics is giving researchers more understanding of the neurological and physiological effects of images and stories intended to produce empathy.

Simultaneously, innovation is exploding in several ways that may add further complexity to the empathy/technology nexus. Advances in wearables as well as smaller and cheaper sensors allow weekend tech-warriors to build their own devices that alter our senses, such as Mitch Altman’s “Brain Machine” glasses that use sound and light to stimulate certain brain activity, or the poking machine armband that delivers a physical poke each time your Facebook friends poke you. These are products made mostly for personal use or demonstration, but they show how easy it is to create devices that shape our experience of the world.

The growth of the Quantified Self movement has made it acceptable (in some circles) to wear your digital heart on your sleeve, and soon products like the Scanadu Scout and Apple’s rumored iWatch will be able to monitor enthusiasts’ biometrics. It’s not a great leap beyond that to interpreting your emotional state, as the Mood Sweater and Heart Spark are already doing.

The implications of detailed emotional data in business could be far-reaching and extreme. For example, health information coupled with emotional analysis could enable pharmaceutical companies to market drugs in a highly personalized and effective way. Personalized medicine may be beneficial, but imagine ads that can interpret emotions and respond on the fly with targeted messaging. For that matter, any industry with access to such data could fine-tune its advertising accordingly. In mobile gaming, crude emotional targeting is already being attempted through personalized and socially networked reward systems. Some argue that games like Candy Crush, today’s top mobile game, are contributing to the demise of gaming by causing millions to spend money on primarily luck-based activities that addict users with the promise of elementary rewards like stars on the screen and social recognition in the game and in social networks. Where will such businesses go as emotional sensing becomes more sophisticated?

The new millennial generation of digital natives frequently shows a greater sense of social responsibility and desire to be connected to one another than previous generations. Many of us want environments, jobs, and products that provide a sense of empathy and fulfillment. Meanwhile, neuroscience, consumer medical devices, and numerous other tools are giving us a deeper understanding of the roots of human sensibility. We may better understand what it means to be human, but the consequences of using these insights are not yet understood. This generation will determine whether we use or abuse empathy.

In his 1985 book “Amusing Ourselves to Death,” Neil Postman posited that Aldous Huxley’s “Brave New World” got it right, not George Orwell’s “1984.” “Orwell feared we would become a captive culture. Huxley feared we would become a trivial culture, preoccupied with some equivalent of the feelies, the orgy porgy, and the centrifugal bumblepuppy. Huxley feared that what we love will ruin us.” More recently, Dave Eggers’s novel “The Circle,” in a sort of homage to “Brave New World,” makes a similar point about the potential dangers of services like Facebook and Google.

Technology and society now intersect at empathy in ways the world has never seen before. To prevent ourselves from fulfilling Huxley’s prophecy, we must be aware of empathy’s side effects. Once technologies that can affect empathy become commonplace, we may need more technology to protect ourselves. If we manipulate empathy, we cannot forget how it works in society—to bring people together.

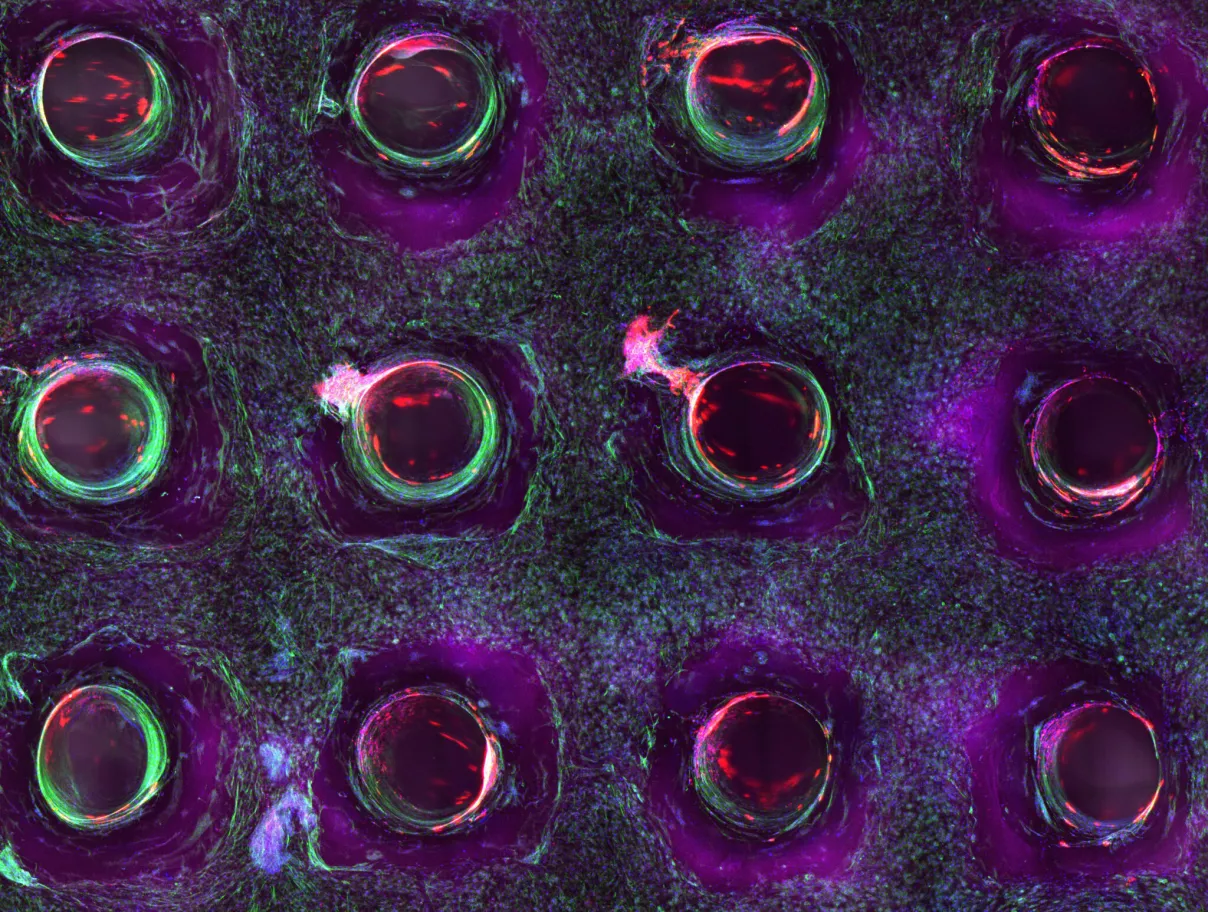

Eri Gentry is an economist turned biotech entrepreneur and an advocate for science literacy. She is a science and technology research manager at the Institute for the Future, an independent research organization, and a co-founder of BioCurious, the first hackerspace for biotech.

This article was originally published in the Techonomy 2014 Report.